The Philosophy of Moon

Unlike most science fiction, Moon (2009) is a quietly disturbing film. Through the crisis of one man we are asked to ponder deep philosophical questions, not just about him but about ourselves, all without being bombarded with unnecessary action.

Sam Bell’s reality is shaken to its core in Moon. Instead of dismissing an event as a glitch and moving on, Sam follows the scent of suspicion and uncovers something bleak. He marches out onto the Moon’s silent, eerie surface and digs it up, all for the sake of truth.

As Sam grows uncomfortable and confounded by the answers he receives, and troubled by his subsequent physical and mental decay, we can only watch and ask: ‘Wasn’t darkness better than the truth?’

Come hither to understand Sam’s journey, and don’t be too sure that you wouldn’t have done the same.

(Warning: The rest of this article contains big spoilers! Watch the film first if you haven’t already, then come back. If you have seen it and remember the events, you can skip ahead to the philosophical analysis.)

The plot

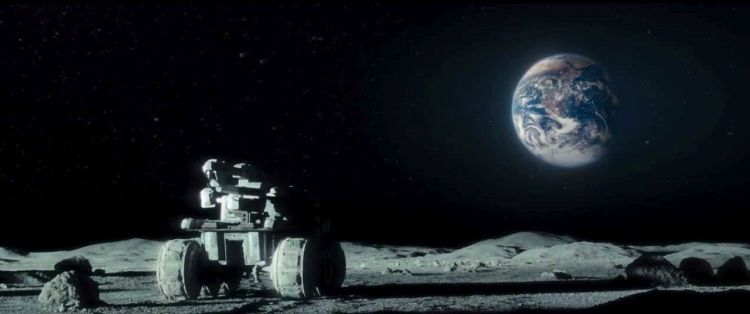

Sam Bell (Sam Rockwell) is the lone worker for Lunar Industries on the far side of the Moon. He is based at the Sarang Station, from where he routinely drives a rover out across the lunar surface to tend the harvesters that mine helium-3 (3He), which is shipped to Earth to be used as an energy source.

Sam is nearing the end of his three-year mission, during which his only companion has been a robot named GERTY (voiced by Kevin Spacey). Sam’s loneliness is evident. Because of damage to the communications equipment, blamed on solar storms, he has no way of contacting people on Earth directly. (Light takes only about 1.3 seconds to travel from the Moon to Earth, so the delay wouldn’t significantly hamper a live feed.) As such, Sam cannot converse with his wife, Tess, and his daughter, Eve, who are becoming increasingly distant from him.

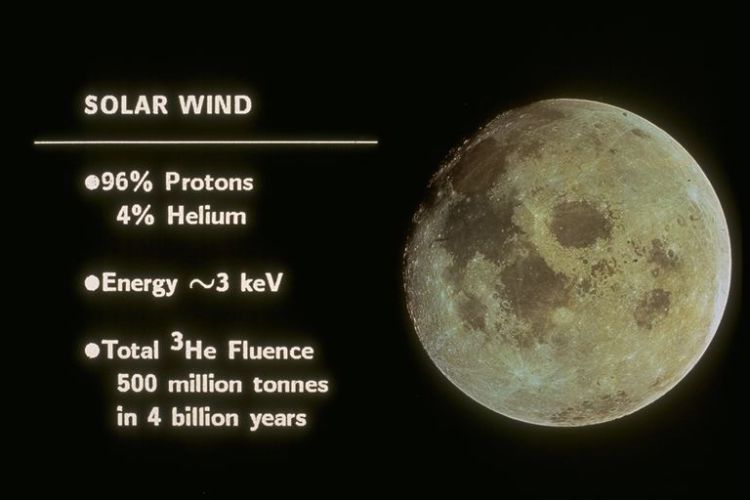

Solar wind — The Moon has no substantial atmosphere and no geodynamo-generated magnetic field. It is therefore defenceless against the solar wind, which deposits atomic nuclei such as 3He on its surface. 3He is a stable isotope of helium whose nucleus contains one fewer neutron than that of ordinary helium, 4He, an abundant inert gas that makes up around a quarter of the mass of ordinary matter in the Universe. 3He, in contrast, is relatively rare and holds the potential to be used as an efficient fuel for nuclear fusion. (Fusion Technology Institute)

Sam’s mission will finally be over in only two weeks’ time, when he can be reunited with Tess and Eve, even if, worryingly, he has not received any video messages from them in a while.

So close to returning home, Sam is showing signs of burnout from three years of solitude: he is physically and mentally exhausted, he is somewhat unhinged and regularly loses his temper, he suffers frequent headaches, he increasingly makes mistakes at work, and he even looks ragged. Perhaps there are only so many old TV shows and sachets of beans one can consume.

More worrying are Sam’s apparent memory loss and hallucinations: symptoms of madness from isolation or something more sinister?

Out on the job and distracted by a hallucination, Sam is involved in a terrible accident. He loses control of the rover and crashes it into a harvester, injuring himself and losing consciousness.

GERTY :’( — That GERTY is a talking AI with a single-lens eye and a soothing voice is a welcome hat-tip to HAL 9000 of 2001: A Space Odyssey (1968). Unlike the mean-spirited HAL 9000, though, GERTY is either programmed to have or develops ‘empathy’ for Sam, increasingly making decisions in Sam’s best interests (disobeying Central’s orders). Can AI really exhibit empathy? Can it feel? In any case, GERTY displays emoji-like faces to express ‘emotion’, fusing the interface between ‘man and machine’, between cells and code.

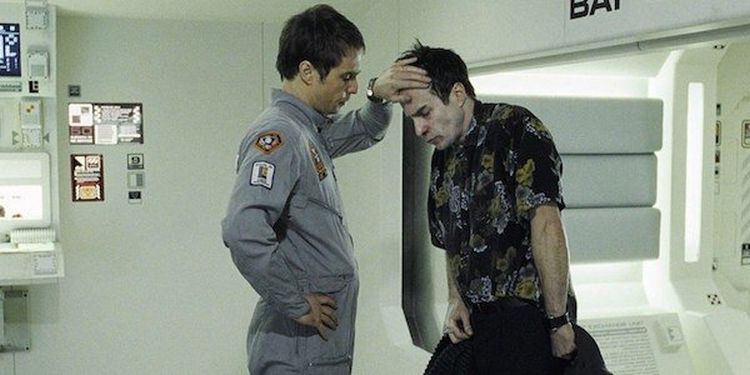

A healthier-looking Sam awakens in the Sarang Station’s infirmary and is made to undergo a series of physical and cognitive tests. Everything seems to be all right. Soon, however, the rejuvenated Sam becomes suspicious of some peculiar activity outside the station. He wants to investigate and has to trick GERTY into letting him go.

Sam was right to be suspicious: pursuing whatever truth is at play here, he makes the unexpected discovery that someone else is out there. They are barely alive, lying in the front seat of the rover.

This is when we realise the cynical secret that Lunar Industries has staged and concealed all along: Sam is a clone. One Sam was in the front seat of the rover; another Sam rescued him. These two and the other clones run the lunar operations in succession, usually without ever meeting one another. When each Sam expires, a new clone is activated. The new clone is told he has been involved in an accident, to explain his presence in the infirmary and to allay the disorientation and confusion he is likely to be experiencing. This is sinister indeed.

‘In many ways, this place is all about contradictions.’ — The creators of Moon (2009) cleverly ensure that the Moon appears familiar and that the Sarang Station feels unthreatening, with the clinical whiteness of its rooms and the digital soullessness of its environment. This gives us the impression that Sam is being well looked after. Yet this faux innocence masks an ulterior motive: Sam Bell is being exploited by Lunar Industries. Or, rather, whichever version of Sam is currently leading the 3He-mining operations is being exploited, each being just one instance of the same abuse.

Hence ‘Sam 2’, the recently activated and fresher of the two Sams, has taken the place of Sam 1, who, by the company’s lights, is as good as dead: his body has not even been recovered from the wreckage of the crash. Sam 2 is clear-minded and free of injuries, and he quickly grasps what is happening, though the knowledge plunges him into gloom. The ill and injured Sam 1 is in denial.

What we witness in each of them are internal and external struggles: fights for Sam Bell within themselves and with each other. Even GERTY, which knows exactly what is going on, treats the two clones as though they were one indistinguishable person.

Eventually, once Sam 1 has recuperated and acknowledged the injustice enacted upon them both, the Sams together face one serious existential issue: that there is an original Sam Bell somewhere who is neither of them and to whom their shared long-term memory belongs.

GERTY: They are memory implants, Sam. I’m very sorry.

Sam 1 becomes noticeably more ill, which is only one of his many problems. Sam 2 pities him and hatches a plan: he will kill the next clone and take the clone’s place; Sam 1 can then covertly return home and see their family first. Sam 1 deserves as much because he has done his three years on the Sarang Station, and he is already unaccounted for by Lunar Industries.

Physical and mental decay — Sam 1, throughout Moon (2009), slowly deteriorates, vomiting blood and losing his teeth, dignity, and motivation for escape. According to GERTY, clones can suffer from ‘genetic abnormalities’ and ‘minor duplication errors in the DNA’. Is the timing a coincidence? Probably not.

Cooperating, the Sams discover signal jammers that are blocking live communication (solar storms had nothing to do with it), part of a massive web of lies designed by Lunar Industries to exploit one man’s identity many times over for commercial gain.

Sam 1 begins to accept that he will soon expire. There is nothing left for him on Earth, where he has never been. Nor is there anything meaningful for him to work for on the Moon, where lies were built up to exploit him. Who knows how long he has actually been there? Perhaps not three years at all. The memory implants have blurred the line between reality and artificiality.

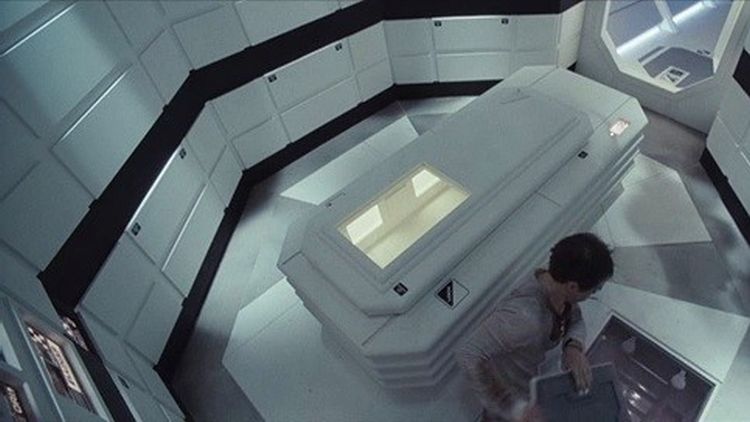

Yet there is still room for one more piece of bleakness in this story. Lunar Industries never intended for any Sam to return home. They just need each Sam to believe he will return home, which ensures that he completes the work delegated to him. Once Sam follows his story’s arc to its end, Lunar Industries murders him. At his journey’s end he steps into a coffin-shaped cryogenic pod, expecting a deep sleep for the three-day journey back to Earth and into the arms of his family. Instead, each Sam is incinerated, ruthlessly terminated, and replaced with another clone.

#FreeThe156 — In the spiritual sequel to Moon (2009), Mute (2018), we see footage of an ongoing legal battle between Lunar Industries and the clones of Sam Bell. There are 156 of them. (Netflix)

Nevertheless, Sam 2’s discoveries, won by pursuing truth to its nasty roots, are not in vain. He helps Sam 1 to a peaceful death out on the lunar surface and escapes to Earth as Sam 1 looks on. In the film’s final moments we learn that Sam 2 exposes Lunar Industries’ egregious practices to the world, as legal action follows and the company’s stock plummets. However, it is possible that the original Sam Bell was complicit in this whole saga: a bitter twist in the tale.

Sam’s plight—rather, Sams’ plights—can be read along three branches of the great tree of philosophy: ethics (cloning), metaphysics (personal identity), and existentialism (purpose). Let’s explore each of them.

Cloning

Creating sickness for a greater good—is this how we justify farming lame cattle, sickly pigs, and diseased hens to ourselves? Are we like Lunar Industries?

The narrative of Moon is imbued with moral questions. Most obviously, Sam was a maltreated product of cloning. But was his treatment justifiable in some way?

On the one hand, the events of Moon represent a case of nefarious, capitalistic exploitation. Lunar Industries was a corporation that privatised an area of the Moon and exploited its workers’ innocent sense of duty without due regard to their welfare. Each Sam believed he was contracted to work for Lunar Industries for a set amount of time and then return home. Yet he never signed a contract; he never consented to work. On whose terms, then, was Sam even there? Not his own. In a nod to Orwellian deception, Lunar Industries cruelly deceived him. Each Sam was also prone to an early onset of illness.

On the other hand, Lunar Industries were achieving something ‘bigger’ than the concerns of ‘one man’. Through Sam’s output they were able to supply energy to many lives on Earth. A valuable supply of 3He was provided at the ‘cost’ of a few short-lived Sams: benefits realised through capitalistic means. On some utilitarian accounts, the energy generated presumably promoted human welfare: citizens gained pleasure, good, happiness, and the like from having more electricity, possibly at a cheaper price. Furthermore, if Lunar Industries’ experiment had gone to plan and Sam had never discovered the truth of his existence, his life, thanks to human cloning, could have been a positive experience overall, filled with purpose in work and family: a life created multiple times ex nihilo, a gift of science.

By no means am I condoning this treatment of Sam. I’m just laying out the questions that Moon dangles in front of us. Similar events are already commonplace in real life, given our treatment of non-human animals, which are farmed and reared on alien lands for human consumption, just as Sam is. People make moral arguments for that: citizens are fed, there is enjoyment in it, and so forth. So were Sam’s cloning, and human cloning in general, in some sense justifiable too?

In Moon, Sam’s cloning was unethical on a number of grounds. For one, he was deprived of basic human rights and of knowledge of his full situation, undermining his dignity. He couldn’t claim a unique identity. He possessed minimal autonomy, as imposed on him by Lunar Industries, who lied to him and clearly did not value or respect his life. He was also harmed, for example by having no ‘real’ family. All in all, he was treated as a mere means to ends that were not his. And these arguments can be multiplied by however many Sam Bells there really were.

Lunar Industries were recklessly negligent and failed to assess the risks properly. Ultimately, many Sams underwent severe mental anguish and/or physical pain upon being ‘born’. Maybe if the outcomes had been slightly different (say, Sam helped provide 3He to Earth, felt purpose in doing so, and remained naïve to the truth all along), Lunar Industries’ corruption might have been ‘offset’ by some good in the world, as per utilitarian thought and the maxim ‘the ends justify the means’, often attributed to Niccolò Machiavelli. However, arguments for Sam’s exploitation and manipulation lack moral standing in a variety of philosophical positions, even if things had been different.

Personal identity

The coffin that Sam unknowingly goes to die in.

Sam may not be an original being, but he is a being, possessing all the usual features of human existence: flesh, blood, self-awareness, rationality, personality, memories, joy and pain, and an array of faculties to think and feel. These give us something to protect in Sam with our ethics: workers’ rights, for instance, to protect his physical and mental health.

SAM 2: Okay, Sam. You’re not a clone.

Gaze at yourself—in the mirror, in a photo, or on video. Struck by self-alienation (Lacan), you see yourself represented externally, separated from where you gaze. Ask yourself afterwards: Who am I? Is that really me there?

Do you have an answer that you are confident in?

Now imagine how self-alienating Sam’s experiences were. He saw actual replications of himself and knew there were more. Sam 1 and Sam 2, plus all the other Sams, used the same name. They were programmed by the same DNA and related to the same family. They housed the same memories; the very structures of subjectivity were duplicated and implanted in body after body. Confronted with his unoriginality, Sam felt himself reduced to nobody at all. One person’s identity had been scattered like a cluster bomb, and each Sam wanted to piece it back together.

These are gutting thoughts. Could Sam have taken a leaf out of Mewtwo’s book? In Pokémon: The First Movie (1998) Mewtwo declares:

In other words, could Sam have formed his own life? Each clone developed independently and became increasingly autonomous over time through his unique vantage point and his own continuous stream of consciousness, always forging new memories and personality traits through experience. But Sam needed something real and unique to hold onto. What was real and unique about him? What is real and unique about you?

The question of personal identity is a matter of metaphysics, in which philosophers ask what a person is and how people persist over time. Some thinkers, such as John Locke, have argued that a person goes where their consciousness goes and consists in things like their memory of events. This view looks like a good fit for Sam’s case. However, it seems to imply something odd for Sam Bell: since, through their memory implants, each Sam projects his consciousness backwards to the same set of experiences, all the clones would be the original Sam Bell, at least initially upon cloning, which is absurd. Granted, Locke would have claimed that there was only one ‘soul’ pertaining to the original Sam Bell.

Other thinkers have proposed broader psychological criteria, appealing to more than memory to define a person. But what features of their mental lives convincingly distinguish the Sams? They have the same personality and ways of thinking, for example. Others prefer bodily criteria to psychological ones. On that view, each Sam would be his own person by virtue of having his own physical body. But this thesis has several issues to contend with, such as the possible presence of two beings in one body once you account for the many mental aspects of existence.

Whichever way we go, each Sam was a real person: his own being with his own identity. The point is that, on any of these accounts of personal identity, there was only one original Sam Bell, and no other Sam in Moon could own the identity he fought for and assumed he had, even in theory, because he was a clone. This fact dawned on Sam 1 and Sam 2 separately, engendering internal battles about who they really were and external battles between them.

With multiple Sams vying for the same identity, Moon lets us witness these philosophical issues surrounding personal identity in action. Naïve to the facts, each Sam at first assumed he was the original Sam Bell. His world was then crushed by the knowledge that he was connected to it only artificially: to Tess and Eve (and another daughter) he was linked only biologically, through shared DNA, not through personal experiences. Nor had he chosen to work on the Moon: Lunar Industries had made that decision and directed his life there.

Only Sam 2 could survive the torture and climb out of his existential pit, taking revenge on Lunar Industries and starting his life again out of a sense of justice. For Sam 1 and the other Sams it came down to this: none of them could define himself as he wanted to, as the original Sam Bell.

Ownership of one’s identity is a personal and existential relationship that consists in pride in who one is and who one will become, and in the free determination of oneself. Sam wanted to believe in, and take responsibility for, his own reality: to curate a narrative about himself based on what he knew of his past. But ultimately he couldn’t, because he knew his life was faked. No matter how vivid his implanted memories felt, he knew they weren’t his. Somebody else had experienced those events. The power of self-ownership, therefore, was never conferred on Sam: parts of him already belonged to another being.

Purpose

To accept an opportunity to work on undisturbed terrain in space for the good of humankind; to feel purpose in aiding a familiar civilisation from afar; to marvel quietly from above at the Earth, that ethereal blue plane: who would say no to that? Well, the question presupposes that one has a choice …

As soon as Sam realised the truth about Lunar Industries’ malpractice and his own existence, his sense of identity was diluted. Clones shared responsibility for the good (and bad) work he did, and they shared his family. But what if Sam had never found out? Despite the void of truth at the centre of his life, Sam was propelled forwards by a project.

Before the events of Moon, successive Sams apparently detected nothing awry during their spells on the Sarang Station that made them lose faith in their missions. Every clone of Sam Bell woke up each day with a simple objective in mind: complete your work so you can see your family again. What Sam’s memories lacked in originality they made up for in vigour. They reminded him every day of the family he longed to see, sustaining his purpose.

In his own eyes, Sam could have owned this identity and felt an individual purpose in it. So long as he didn’t uncover the truth, Sam was happy and probably fulfilled. In general, isn’t ignorance so often better in life? What are the limits?

Friedrich Nietzsche, amongst other philosophers, believed truth to be merely contingent and non-central to a life-affirming philosophy. As humans we seek truth. We do so because we possess the will to truth: we discard old values and replace them with new ones in light of what we think is the truth because we want the Truth.

Nietzsche encouraged us to rid ourselves of this impulse. False judgements can be significant. In fact (so to speak), false judgements can promote, preserve, and cultivate life, thereby affirming it. To seek truth for truth’s sake is a fool’s endeavour. The pursuit will outdo itself, paradoxically leading one who hunts truth by its mere scent to a lack of truth. Nietzsche applied this argument to morality in Europe, arguing that the distinction between good and evil is unfounded and will be found out as such.

What does this all mean for Sam? A falsis principiis proficisci: Sam’s project proceeded from false principles and, beneath them, lay hidden horrors of truth with great potential to hurt him. Yet Sam tracked them down relentlessly anyway. He valued truth highly at the cost of his project’s meaning. We might ask, like Nietzsche: Why? To what end? So long as Sam was unaware that the premises for his being on the Moon were false, he had a set of life-affirming objectives to live by. He could have lived and then died satisfied, knowing he was doing a magnificent job on the Moon. He wasn’t supposed to know how it would all end. Life could have been brief but still good, much like ours. Sometimes it’s best not to know the answers; and often the answers aren’t there anyway.

But, given our instinct to hunt truth, for how long can an inquisitive human disregard suspicion and embed themselves in ignorance? In Sam’s case, it wasn’t long. Something was up and he felt compelled to conduct an investigation, simpliciter, regardless of the outcomes of his search and what they meant for him (the consequences were disastrous). Curiosity drives the human spirit towards truth—at least what ye ‘truth seekers’ think is the truth—displacing that which makes us happy in ignorance.

Could Sam have lived a fulfilling, albeit short, life on the Moon working for Lunar Industries in light of the truth? Perhaps Sam could have worked alongside the truth absurdly: conceding that his life was meaningless and yet, notwithstanding the hollowing out of this life, pushing on with fulfilling acceptance, resigned to coexisting with his torturers but completing his daily tasks in order to defy them. (Isn’t this what working in the real world is like for most of us anyway, especially in retail?)

No: once Sam knew the truth—that he was not the original Sam, that being placed on the Moon wasn’t his choice, that his purpose was being shared out between a multitude of Sams—he couldn’t go back to his illusion. Could you?

Searching for the ground

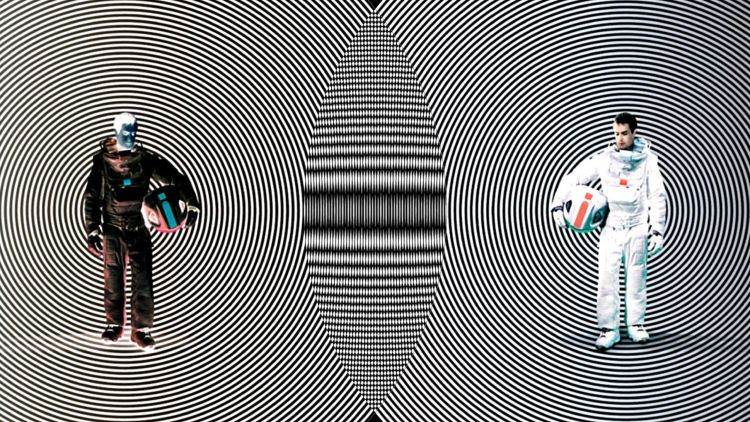

The multitude of Sam. (Sony Pictures/Courtesy Everett Collection)

Uncertainty isn’t easy to cope with, and in its aftermath neither a return to certainty, to stability, nor satisfaction is guaranteed.

Ignorance might have been better than truth for Sam, but the decision was never his to make: that power belonged to the despicable Lunar Industries. What they did was plainly unethical, and Sam deserved more. They all did.

Like Pinocchio, like Roy Batty of Blade Runner (1982), like David of A.I. Artificial Intelligence (2001), like Ava of Ex Machina (2014), and like many more, Sam wanted reality: to be a real person of real consequence, leading a life that happened and is still happening. He vied for his own memories and for an identity to truly take ownership of. But the grounds of Sam’s existence vanished beneath him when the truth was revealed.

Sam was destined to be crushed by the very reality he sought and, in the end, he had no choice but to let go of a life that had already deserted him. His purpose was rapidly deflated to nothing with a simple prick of the truth: a troubling thought for those of us who also want to follow truth to its feeble ends.