Are X-Rays Harmful? Why Scientists Cannot Say

Featured image: X-rays and other forms of radiation emissions are key to making many diagnoses. Radiation at this dose range is potentially damaging. Yet from my career as a radiation specialist I learned that this risk is unknown. We choose to err on the side of caution by pretending the risk is real. Arguably, as far as we know, it isn't. (BMA)

Radiation has a bad reputation. Its use is associated with atomic bombs, spy poisonings, nuclear catastrophes, and cellular mutations. But we’re exposed to radiation every day: from radioactive materials in Earth’s ground and atmosphere, cosmic rays from space, and from food and drink. Moreover, we’ve engineered technologies that enable us to intentionally deliver radiation to diagnose and treat bodily problems. Yet we’re still not very familiar with what radiation actually does to us.

In hospitals radiation is 'fired' into patients on purpose to image or treat them. Radiation is of particulate or electromagnetic form and can come from radioactive atoms and machines. For instance, we use alpha particles (pictured) to treat bone cancers, beta particles (electrons) to treat Graves' disease, X-rays (energetic photons) to reveal tuberculosis, and gamma rays (also energetic photons) to evaluate kidney performance. (Source)

You’d be forgiven for trepidation: enough radiation will harm you without doubt. But what about low levels of radiation, like those emissions released during X-ray exposures?

To judge whether X-rays, or any other form of radiation delivered at low doses, can harm us, we must appreciate some philosophy of science. Despite the armies of specialists employed to advise on the use of radiation in hospitals around the world, there is, quite unsatisfyingly, no clear answer. Might, then, low-dose radiation even be good for us? It’s possible…

Radiation

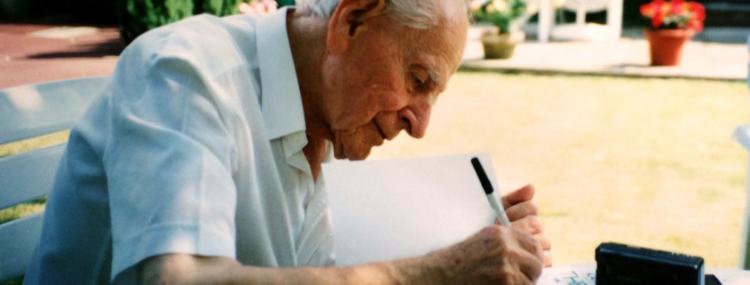

Marie Curie, the mother of radioactivity. A Polish-French national, Curie won Nobel Prizes in physics and chemistry, making her the first person in history to win two, despite being discriminated against for being a woman and a foreigner. Curie posited that rays emanate from certain kinds of atoms (radioactive atoms) in her theory of 'radioactivity'. She was right. Her work had a profound effect on healthcare, for radioactivity can be used to image and treat many kinds of bodily conditions. Curie died in 1934 of a blood disease caused by too much radiation exposure, an effect unknown at the time. Yet, still, there is much we don't understand about the effects of radiation. (Getty Images)

I must be clear that, in this article, I discuss ionising radiation: the kind of radiation that has enough energy to give you a radiation dose by separating electrons from the atoms of your cells, not the kind of radiation that you use to heat your food or change the TV channel with. (Microwave and infrared radiation lie in the non-ionising region of the electromagnetic spectrum. They may or may not be able to harm you in different ways.)

When ionising radiation was first discovered it was used rather frivolously. Wilhelm Röntgen, the discoverer of X-rays and Nobel Laureate, showed the world that X-rays (relatively energetic photons) can be used to trace patients’ bones by travelling through patients’ tissues and being detected on the other side. He started with the bones of his wife’s hand.

Through the routine use of radiation we can now acquire millimetre-resolution images. But, as you have awaited CT scans, which require X-ray exposures through your body, or when you've had X-ray machines pointing at your face at the dentist, have you ever wondered whether the radiation will eventually be harmful to you? (Wikipedia)

After Marie Curie and her husband Pierre Curie discovered radioactive radium in 1898 it was put into toothpaste and cosmetics and on walls and toys. Their discoveries excited people and suppliers were keen to catch the wave. They did so without knowing how radiation would affect people. Yet, over a century later, we still can’t really claim to understand the risks. Some scientists, such as physicians at Radon Palace spa, even claim that radiation exposure can be beneficial for our health.

The atomic bombs

The population of Nagasaki awaits the fallout from the atomic bomb cloud of 9th August 1945. Tens of thousands of people died that year (around a quarter of the city's population). Soon after its detonation the bomb's blast, heat, and radiation killed many people around its hypocentre. Radiation, then, can clearly be devastatingly harmful. But no one is denying that. What some people question is whether low-dose exposures, such as those people relatively far away from Nagasaki received that day, are harmful. No one can be sure how many people died of cancers in the following decades. (The Conversation)

Let me tread carefully here: radiation can harm you. A sufficiently powerful event will almost certainly harm you. For instance, really high doses will probably give you acute radiation syndrome, which can be fatal. At slightly lower doses one can expect hair loss, skin burning, and nausea to occur. However, what I’m scrutinising here is the relationship between low levels of ionising radiation exposure and the long-term effects on our health. At this dose range the biggest risk factor is cancer. The question then becomes: how much radiation will give you cancer?

The events of the atomic bombs in Hiroshima and Nagasaki, Japan, of 1945 provided us with some useful radiobiological data, as tragic as the events were. From theoretical estimates of radiation doses received by members of the public and with knowledge of incidences of cancer in the local populations we were provided with a real-life measure of radiation risk.

The problem was, and still is, that the investigation into the effects of the Hiroshima and Nagasaki bombs did not represent a controlled experiment (assuming accurate control experiments are even theoretically possible): doses to members of the public obviously have to be crudely estimated and available data are particularly scarce at low doses. This void of knowledge has left room for all kinds of scientific theories to be candidates for what actually happens when radiation enters human bodies.

Is radiation good for you? Well …

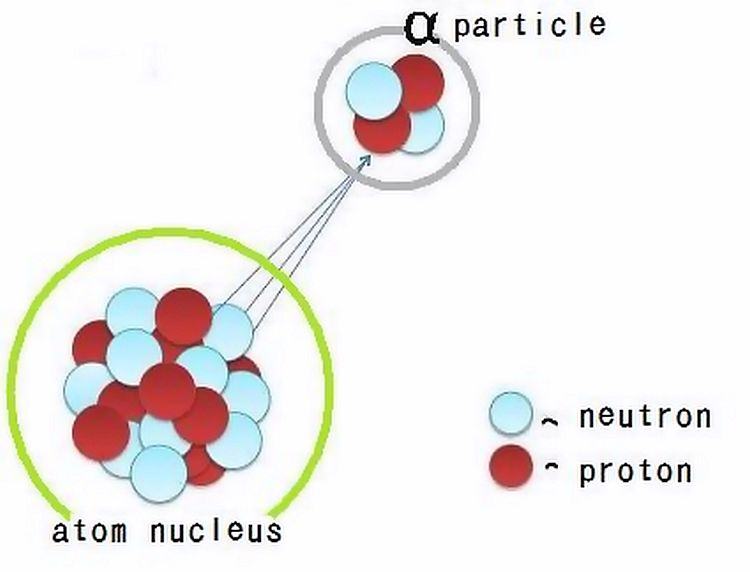

Radiation is invisible but are the effects of low-dose radiation exposure observable? The vertical axis is a scale of radiation risk (e.g. cancer) and the horizontal axis is how much radiation dose someone receives. The dashed lines visually represent four different ways to describe how risk changes with radiation dose. Good enough predictions can be made at high doses (where the black bars are) but there is a shortage of data at low doses, such as those received during X-ray examinations. This shortage is the source of the differences in how the risk is modelled, and of great debate. (TU Delft)

Radiation tends to strike fear in people, which is understandable given that ionising radiation can be harmful in many ways. But we should be used to risk. We leave our houses every day knowing that all kinds of things could happen. God, something adverse could happen if we stayed inside our houses. Nonetheless, we accept the benefits of being able to go outside because we deem them to outweigh the risks. Radiation is treated the same way: there is a small risk to being exposed to it but there is a medical benefit to doing so. Still, we might feel, this possibility justifies our fears of it.

The most common way of expressing the danger of ionising radiation is through the likelihood of cancer beginning to form after radiation exposure. The unknowns we face, however, are how much exposure will cause cancer and the relationship between cancer likelihood and radiation exposure.

Cancer incidence has a seemingly random component to it too. That’s not to say the chances of you developing cancer because of radiation are completely random: it’s just that, at low doses, there is some probability that you will.

To appreciate your chances faithfully you need evidence for an accurate model. The most intuitive thing for many people to do is to assume a straight-line relationship (a ‘linear’ model), where every increase in radiation dose is associated with a proportionately greater risk of cancer (red dashed line above). Most governments set limits on the radiation people can receive based on this kind of model. The UK, for example, uses this model to assume that low-dose radiation exposures are harmful. As such, a classified radiation worker is only legally allowed to receive 20 mSv (millisieverts) in one year, which is equivalent to around two CT scans. The added risk of receiving this amount, as predicted by one linear model, is approximately 0.01%, on top of the 50% chance of developing cancer that such a worker has over their lifetime anyway.
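To make the arithmetic of a straight-line model concrete, here is a minimal sketch in Python. It uses only the figures quoted above (20 mSv, 0.01% added risk, 50% lifetime risk). The slope derived from them, the function names, and the simple addition of added risk to baseline risk are illustrative assumptions, not an official risk coefficient or a regulator's method.

```python
# Figures quoted in the paragraph above.
DOSE_LIMIT_MSV = 20.0                # annual limit for a classified worker
ADDED_RISK_AT_LIMIT = 0.01 / 100     # 0.01 % expressed as a fraction
BASELINE_LIFETIME_RISK = 0.50        # 50 % lifetime chance of cancer

# Assumption: the line passes through zero, so the slope is simply
# the quoted added risk divided by the quoted dose.
RISK_PER_MSV = ADDED_RISK_AT_LIMIT / DOSE_LIMIT_MSV


def linear_excess_risk(dose_msv: float) -> float:
    """Added cancer risk (as a fraction) under the straight-line assumption."""
    if dose_msv < 0:
        raise ValueError("dose must be non-negative")
    return RISK_PER_MSV * dose_msv


def lifetime_risk(dose_msv: float) -> float:
    """Baseline risk plus added risk; simple addition is a simplification."""
    return BASELINE_LIFETIME_RISK + linear_excess_risk(dose_msv)


if __name__ == "__main__":
    for dose in (0.0, 10.0, 20.0):
        print(f"{dose:5.1f} mSv: added risk {linear_excess_risk(dose):.4%}, "
              f"lifetime risk {lifetime_risk(dose):.4%}")
```

Under these assumptions, halving the dose halves the added risk, and no dose is small enough to carry zero risk. That last property is exactly what the low-dose data cannot confirm.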

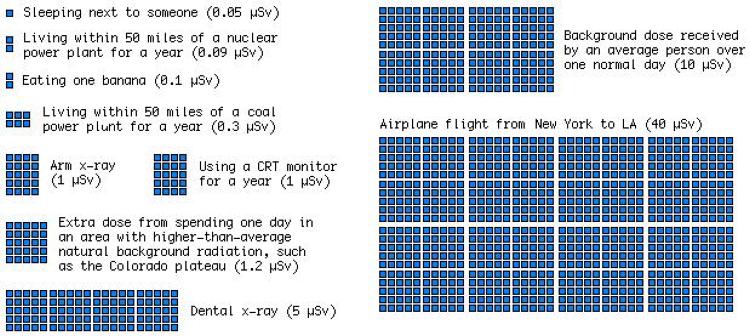

A useful display of expected radiation doses in everyday situations (expressed in units of microsieverts). But what about the risk? (xkcd)

But for the low dose range there simply isn’t an evidence base to be sure of the probabilities quoted. Historically, we’ve had to rely on animal and cellular studies, computer models, and retrospectively acquired data from uncontrolled studies, all of which have their own flaws.

In fact, if you believe in some models, benefits from radiation exposure should be expected (green dashed line). The large uncertainties calculated (black bars) actually cover the negative part of the vertical axis. Negative risk is equivalent to benefit! Many scientists adopt this position in earnest and criticise those who do not. I have written about this debate before, and received criticism from such scientists for doing so.
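As a purely illustrative sketch of how two candidate curves can agree at high doses yet disagree in sign at low doses, the Python below compares a straight-line model with a hypothetical 'beneficial at low dose' curve. The functional form, the parameter values, and the arbitrary units are all assumptions chosen for illustration; nothing here is fitted to real data.

```python
import math

# Arbitrary, illustrative parameters (not fitted to any data).
SLOPE = 1.0        # risk per unit dose in the straight-line model
BENEFIT = 2.0      # strength of the assumed low-dose protective term
DOSE_SCALE = 1.0   # dose over which the protective term fades away


def linear_risk(dose: float) -> float:
    """Straight-line model: risk grows in proportion to dose."""
    return SLOPE * dose


def hormetic_risk(dose: float) -> float:
    """Hypothetical curve: negative risk (benefit) at low dose,
    approaching the straight line as the dose becomes large."""
    return SLOPE * dose - BENEFIT * dose * math.exp(-dose / DOSE_SCALE)


if __name__ == "__main__":
    for dose in (0.0, 0.25, 0.5, 1.0, 2.0, 5.0):
        print(f"dose {dose:4.2f}: linear {linear_risk(dose):+.3f}, "
              f"hormetic {hormetic_risk(dose):+.3f}")
```

With these made-up parameters the second curve dips below zero at small doses and climbs back towards the straight line at large ones. Data concentrated at the high-dose end would struggle to tell the two apart.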

Philosophy of science

Karl Popper: 'The best we can say of a hypothesis is that up to now it has been able to show its worth, and that it has been more successful than other hypotheses.' (Adam Chmielewski)

The possibility of radiation being good for you may sound like a mathematical quirk but it’s a real possibility insofar as we trust the scientific method, which asks us to make hypotheses and test them with data. If we are to be good scientists, we should believe what mathematics expresses about our data: mathematics is the language of science. We can’t pick and choose things based on our intuitions. We already accept many other counterintuitive observations as scientific knowledge, such as the exchange of virtual particles in particle physics and the imaginary representation of time in relativity and quantum mechanics and electrical engineering.

On the other hand, one might claim, everything we seem to know about radiation at high doses speaks of adverse effects. Surely, then, this claim would be true of low-dose radiation exposures too? No, not in terms of the scientific method. Our scientific knowledge stops being steadfast in this region; and without more experimental data to verify our hypotheses, we can only make conjectures about the physical properties of the Universe, in this case by extrapolating from the atomic-bomb region to the X-ray region of our radiation risk graph. Failing the supply of more data to test our hypotheses, there is no good reason to presuppose that we understand the risk from radiation down to zero dose insofar as we trust science.

What’s worse is that it might be true that we cannot claim any scientific truths. Well-respected philosopher of science Karl Popper claimed that scientific theories, be they about the sky or radiation, cannot be established by induction: that is, we cannot prove them, only disprove them. (Scientists reading this might be familiar with p-values, which are used as tools of falsification for rejecting null hypotheses in this spirit.) In the case of radiobiology, since neither a linear model nor radiation being good for you has been disproved, both remain candidates.

Will the sky be blue tomorrow? While I believe that it will, I accept that the scientific method can't show me why. 'But,' you might claim, 'it will because light will scatter off particles in the atmosphere just like it usually does.' However, although we have deduced the phenomenon of Rayleigh scattering, this deduction is based on prior data and says nothing of tomorrow's facts. According to philosopher David Hume's 'problem of induction', there is no proof today that can serve as proof tomorrow. As absurd as this all seems to our personal intuitions, given our daily experiences of the sky, with one simple example we have reached the boundaries of the scientific method. (Source)

So what does this all mean? It means that our assumption that radiation is harmful at low doses is made on scientifically shaky grounds.

It would be reasonable to write cautiousness into our legislation and use as little radiation as ‘reasonably practicable’ in hospitals—as we do now—if the evidence base was modest and there were no costs to doing so. But there are social costs to consider: costs to patients being frightened of something with unknown consequences; to patients given radioactivity for therapy being told that they can’t sleep in the same bed as their partners or see their young children or friends for days; to a ‘radiophobic’ culture that encourages sub-optimal imaging that potentially fails to capture otherwise-detectable diseases. Moreover, there are financial costs to consider that arise from employing people, like me, apparently radiation safety experts, and from purchasing protective equipment, often using taxpayers’ money, all of which carries an opportunity cost to society (what else could we do with that money?).

Meanwhile, in the world of nuclear energy, official figures show that 1,000 deaths have been associated with the evacuation that followed the accident at the Fukushima Daiichi power plant. The risks associated with people staying at home and receiving relatively low-level radiation exposures arguably pale into insignificance when compared to this figure.

Still, there will be dire consequences if low-level radiation exposure is, indeed, bad for us—and who will be responsible for it?

In conclusion

If you asked me what I thought about this debate, I’d say that the current consensus prohibits seeking answers and favours caution. But who will be accountable for the costs associated with that caution? No one.

As a scientist, I have faith (so to speak) in the scientific method. While the scientific method only enables us to garner confidence in a hypothesis by describing a regularity of observed events more accurately than before, being more accurate has allowed us to save and improve billions of lives. As such, we require a substantial and compelling evidence base, which we should look to accrue over time.

One could make an argument that we should begin a program of clinical trials on consenting human participants. We could pay them to see if they developed cancer a decade or two after controlled radiation exposures. Thousands of people could financially benefit for every cancer developed, if the current models were approximately correct, and the answers from these trials could settle the debate and rid us of the crippling uncertainty to improve healthcare.

This is arguably unethical, though: such an important, controlled study would require an enormous amount of resources to be statistically powerful enough to be satisfactory, while it poses grave risks to the ‘willing’ individuals, who may come to rue the decisions their former selves made. It is a story familiar to those aware of the dark history of clinical trials (e.g. from intentionally withholding syphilis treatment from African-American men during the Tuskegee syphilis experiment to testing cheap lead paint removal techniques by purposefully exposing poor children to hazardous environments wherein they could develop various neurological diseases in the Baltimore Lead Paint Study).

But most actions come with risks. Why do we let caution overwhelm us so systemically when it comes to radiation? There are significant costs at play if we’re wrong.

Paralysed by caution, will we let uncertainty rule us?

I say, whatever we choose, let’s be grounded in what we know right now, as agnostics. With whatever ethically acquired data we come by, let’s see what we can do. With rigour and with a genuine, unparalysed passion to rule out false premises, let’s not hide from the scientific truth.